By Thomas Ultican 4/20/2026

School districts throughout America are being pushed to spend huge sums to implement artificial intelligence (AI). This Silicon Valley oligarch plan is much more about profits than good education. It is true that AI has great potential, but for schools the known downsides are much larger than its present benefits. Education organizations – especially K-12 – should not spend money implementing AI at this time.

I am not the only one giving this counsel. The Brookings Institution released a report this January that concludes, “At this point in its trajectory, the risks of utilizing generative AI in children’s education overshadow its benefits.” (Page 12)

The loudest and most positive commentators about AI in education all have links to tech industries. While not blatantly shilling for edtech, they present AI as inevitable and advise us about what we need to know to get it right. For example, University of San Diego’s Matt Evans wrote “The Role of Artificial Intelligence in Education: How Educators Can Succeed” for the school’s Professional and Continuing Education.

The article is mostly an advertisement for the school’s AI in education master’s program. His article has several sub-headings like “Redefining Education in the Age of AI,” “The Evolving Role of Educators” and “How AI Is Reshaping Learning Institutions.” He does mention that there are some cons to AI like “Equity gaps” and “Overreliance.” In his article, Evans declares:

“We’re in a defining moment for education, where the systems we build today will influence generations of learners. To engage with AI constructively, educators and leaders must commit to continual learning and collaboration.”

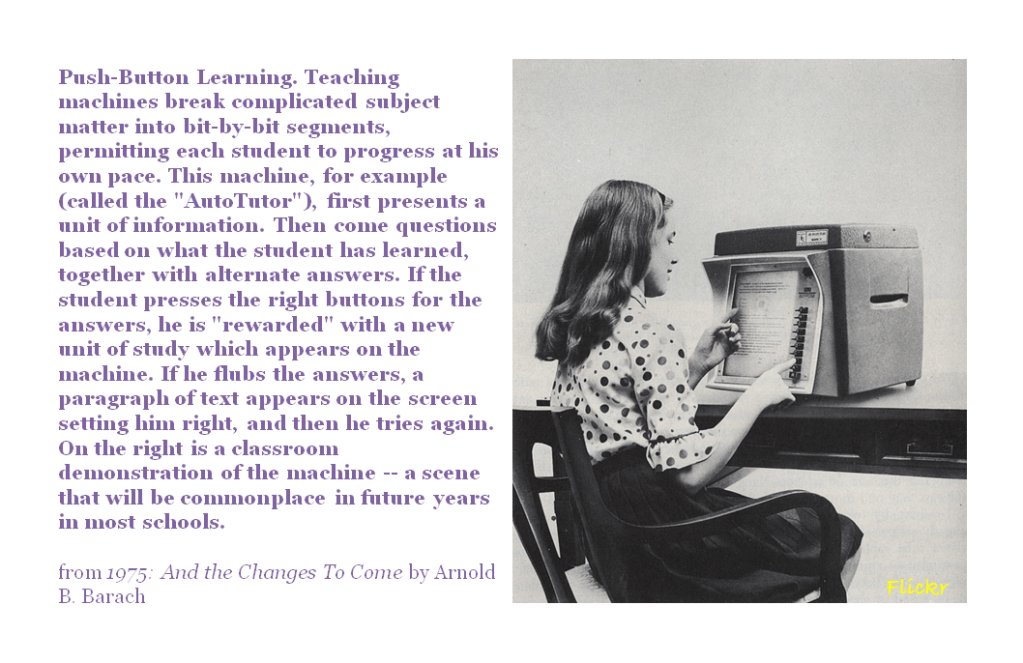

He might not be right about that. The AI pitch looks suspiciously like the same education technology song and dance that has bombarded schools for more than a century. The claim is that education technology will personalize learning and engage kids like never before. Teaching machines are not a new idea, and their hundred-year-old promises are almost identical to the promises we hear about AI today. In the foreword to her book, Teaching Machines, Audrey Watters wrote:

“Education psychologists like Sidney Pressey, the person often credited with inventing the first ‘teaching machine,’ talked about using mechanical devices in the 1920s in ways almost identical to those who push for personalized learning today, all so that, as Pressey put it, a teacher could focus on her ‘real function’ in the classroom: ‘inspirational and thought-stimulating activities,’ including giving each student individualized attention.”

Algorithmic Decision-Making is Fueling Anxiety

The Dark Side of AI

Last October the Center for Democracy & Technology published “Hand in Hand: Schools’ Embrace of AI Connected to Increased Risks to Students.” It is a report based on survey data put together by Elizabeth Laird, Maddy Dwyer and Hannah Quay-de la Vallee. They opened the report:

“This report details the current status of AI use in schools along with four emerging risks associated with this technology, all of which increase the more that a school uses AI:

- Data breaches or ransomware attacks;

- Tech-enabled sexual harassment and bullying;

- AI systems that do not work as intended; and

- Troubling interactions between students and technology.” (Page 5)

The report shares, among other outcomes, the following results.

- 59% of parents believe AI is exposing children to inappropriate content. (Page 9)

- 23% of teachers reported a large-scale data breach with AI. (Page 11)

- 71% of teachers, 72% of parents and 64% of students believe AI is harming critical thinking skills and weakening key skillsets. (Page 22)

- Deepfakes and non-consensual images of 12% of students are expanding sexual harassment and bullying. (Page 39)

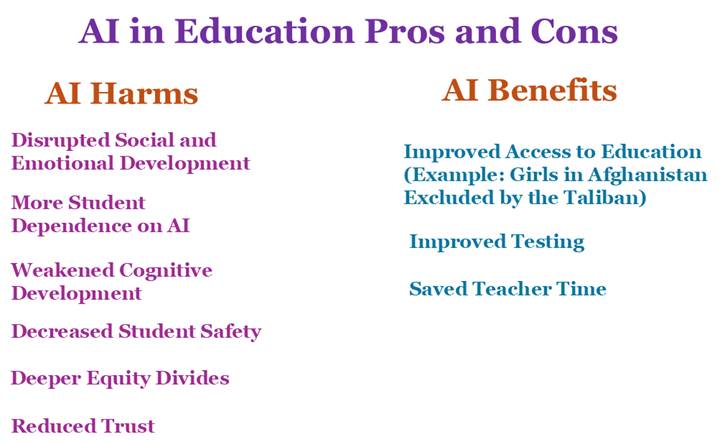

The University of Illinois posted “AI in Schools: Pros and Cons” in October 2024. Two of the cons they cited are quite significant: “high implementation costs” and “unpredictability and inaccurate information.” The article states, “Simple generative AI systems that teachers can use in lesson planning can cost as little as $25 a month, but larger adaptive learning systems can run in the tens of thousands of dollars.” They also share, “If the data it draws from is inaccurate or biased, then the information it creates will be inaccurate or biased.”

Justin Reich wrote in The Conversation, “At MIT, I study the history and future of education technology, and I have never encountered an example of a school system – a country, state or municipality – that rapidly adopted a new digital technology and saw durable benefits for their students.”

The Brookings report made the same point as Reich. It cites a paper from the United Nations Educational, Scientific and Cultural Organization (2023), one by the Organisation for Economic Co-operation and Development (2015) and a paper by Amy West and Hanna Ring published by The International Education Journal: Comparative Perspectives (2023). Based on these papers, the authors asserted that education systems investing heavily in technology did not improve teaching and reading. (Page 11)

Brookings researchers bolstered this argument, stating, “The mobile broadband example illustrates this pattern— while Internet expansion correlates with economic development, a study of 2.5 million 15-year-olds from 82 countries suggests that the rollout of 3G coverage from 2000-2018 produced statistically significant declines in math, reading, and science scores, as well as students’ social relationships and sense of belonging….” (Page 11)

These findings correspond exactly with what I observed in the classroom during that same period.

The Brookings paper notes that AI tools prompt “overreliance, emotional and cognitive dependence, and diminished critical thinking.” (Page 17)

The report also points out that “AI does not possess true intelligence; it operates through statistical pattern recognition rather than reasoning or comprehension.” In the consumer world, speed and engagement are valued over safety or learning. AI frequently hallucinates, presenting false or nonsensical information. The paper states, “Student-facing tools often provide an ‘illusion of impact’: assumed to be high-value but frequently modeling poor pedagogy, misunderstanding how children learn, and perpetuating rote approaches….” (Page 123)

Last year, an American Psychological Association magazine claimed, “Much of the conversation so far about AI in education centers around how to prevent cheating—and ensure learning is actually happening—now that so many students are turning to ChatGPT for help.” Two big downsides to AI include students not thinking through problems and rampant cheating.

Benjamin Riley is a uniquely free thinker. He spent five years as policy director at NewSchools Venture Fund and founded Deans for Impact. His new effort is Cognitive Resonance, which recently published “Education Hazards of Generative AI.” Given his background, I was surprised to learn he does not parrot the billionaire line. A year ago Riley wrote:

“Using AI chatbots to tutor children is a terrible idea—yet here’s NewSchool Venture Fund and the Gates Foundation choosing to light their money on fire. There are education hazards of AI anywhere and everywhere you might choose to look—yet organization after organization within the Philanthro-Edu Industrial Complex continue to ignore or diminish this very present reality in favor of AI’s alleged “transformative potential” in the future. The notion that AI “democratizes” expertise is laughable as a technological proposition and offensive as a political aspiration, given the current neo-fascist activities of the American tech oligarchs—yet here’s John Bailey and friends still fighting to personalize learning using AI as rocket fuel.”

Conclusion

For more than thirty years, technology companies have looked to score big in the education sector. Instead of providing useful tools, they have schemed to take control of public education. At the onset of the twenty-first century, technologists claimed that putting kids at computers was a game changer and would fix everything plaguing public schools. Then they began promoting tablets with algorithmic lessons as providing better education than a human teacher. Today’s hoax is that artificial intelligence (AI) will make all these failures work. It is not just an expensive scam; it harms both children and America’s democratic future.
